This repository includes a setup for Vagrant, the VM automation and management wrapper, that sets up a virtual HPC cluster environment with compute nodes and dedicated service nodes (NFS, login, workload management), which are connected via a 3D torus network/mesh.

This topology is effectively a 3D torus running Microsoft's CamCubeOS on top. The purpose is to optimize traffic flow across the torus while it is being used to interconnect clusters of hosts. CamCubeOS assumes that traditional network forwarding paradigms are.

Required Software

- Ruby (Version 2.2.2 or later)

- Vagrant (Version 1.7.2 or later)

- Packer (Version 0.8.2 or later)

- VirtualBox (Version 4.3.30 or later)

- Serverspec (Version 2.2.0 or later)

- Univa Grid Engine (Version 8.2.0) (Download the demo tar.gz files to the root of this directory)

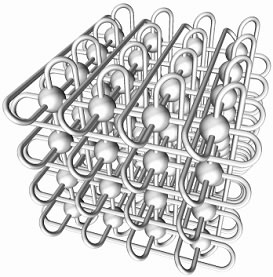

- Green lines are edge/wrap-around connections.

- Blue lines are non-wrap-around links between the hosts.

- Black lines are connections to service nodes.

- `-m`: Start rake multithreaded.

- First, the values from the central configuration file `cluster.yml` are read.

- Next, provisioning starts for the service NFS and login nodes.

- When the node setup is finished, provisioning of the queue master host begins. The master connects to each host, installs the execution daemon, and configures the NFS clients to connect to all of the NFS hosts.

- If SELinux is disabled.

- If EPEL is installed and configured.

- If all necessary programs are installed.

- If passwordless SSH authentication for vagrant is configured.

- If the VirtualBox Guest Additions are installed and enabled.

- If the login nodes have the appropriate path set and if job submission is possible.

- If the queue master node has the appropriate path and if the qmaster process is running.

- If the NFS nodes have the NFS tools installed and if the exports are set properly.

- If the compute nodes have the appropriate $PATH set and if the execd daemon is running.

- Use an open source, directly installable workload manager (like SLURM)

- Offer options to use other IGPs like IS-IS instead of OSPF

- Georg Rath

- Christopher T. Lydick

- Vincent Bernat (Serverspec Viewer)

The Torus Network

For this project we chose a directly connected network, i.e. no external switches or routers are used; each of the hosts is directly connected to its nearest neighbors and has OSPF routing enabled. OSPF was chosen to propagate the torus topology within the cluster. Neighbors are within the same L2 (Ethernet) and L3 (IP) networks.
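Because every host acts as a router, traffic between hosts that are not direct neighbors is forwarded hop by hop through other hosts. The following is a minimal sketch of the resulting hop counts, assuming the 3x3x3 torus used in this project and equal cost on every link (an assumption; the actual OSPF costs are not stated here). The `(x, y, z)` coordinates and the function name `hop_distance` are illustrative and not taken from the repository.

```python
from itertools import product

SIZE = 3  # assumed grid size per dimension (3x3x3 torus)


def hop_distance(a, b, size=SIZE):
    """Minimum hop count between two torus nodes given as (x, y, z).

    In each dimension a packet can travel either directly or through the
    wrap-around link, so the shorter of the two directions is used.
    """
    return sum(min(abs(p - q), size - abs(p - q)) for p, q in zip(a, b))


nodes = list(product(range(SIZE), repeat=3))

# Largest hop count between any pair of nodes (the network diameter).
diameter = max(hop_distance(a, b) for a in nodes for b in nodes)

print(hop_distance((0, 0, 0), (2, 0, 0)))  # direct neighbors via wrap-around
print(diameter)
```

Under these assumptions no pair of hosts is more than three hops apart, since the wrap-around links keep every coordinate at most one step away in each dimension.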

As described above, we are using a 3D torus to connect the compute hosts with each other. There are a total of 27 compute nodes in a 3x3x3 grid with 'wrap-around' links at the edges forming a torus. The network is robust against failure of individual links, as routing information is exchanged at a configurable time interval via the routing protocol. The 3D torus requires a total of 6 links/network interface cards for every compute node. There are a total of 162 point-to-point connections within the torus.
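The numbers above can be checked with a small sketch. It models each compute node as an `(x, y, z)` coordinate (an illustrative naming scheme, not the repository's host names), derives the six neighbors of every node, and uses a breadth-first search as a stand-in for what a routing protocol would converge to after a link failure. The helper names `neighbors` and `hops` are hypothetical.

```python
from collections import deque
from itertools import product

SIZE = 3  # nodes per dimension, giving the 3x3x3 grid from the text


def neighbors(node, size=SIZE):
    """Return the six torus neighbors of a node given as (x, y, z)."""
    result = set()
    for axis in range(3):
        for step in (-1, 1):
            coords = list(node)
            coords[axis] = (coords[axis] + step) % size  # wrap around at the edges
            result.add(tuple(coords))
    return result


def hops(src, dst, failed=frozenset()):
    """Breadth-first search for the hop count from src to dst, skipping failed links."""
    seen = {src}
    queue = deque([(src, 0)])
    while queue:
        node, dist = queue.popleft()
        if node == dst:
            return dist
        for nxt in neighbors(node):
            if nxt not in seen and frozenset((node, nxt)) not in failed:
                seen.add(nxt)
                queue.append((nxt, dist + 1))
    return None  # destination unreachable


nodes = list(product(range(SIZE), repeat=3))
interfaces = sum(len(neighbors(n)) for n in nodes)  # one interface per link end
links = {frozenset((n, m)) for n in nodes for m in neighbors(n)}  # each link once

print(len(nodes), interfaces, len(links))

a, b = (0, 0, 0), (1, 0, 0)
print(hops(a, b))                                # direct link available
print(hops(a, b, failed={frozenset((a, b))}))    # direct link failed
```

In this model the 27 nodes have 162 interface endpoints in total, which corresponds to 81 links when each link is counted once rather than once per end. With the direct link between two neighbors removed, the search still finds a two-hop detour over the wrap-around link in the same dimension.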

The service nodes (NFS servers, login nodes, queue master) are connected to individual hosts, configurable via the central `cluster.yml` configuration file. The idea of using a 3D torus network as an interconnect was also used by Christopher T. Lydick in his Master's thesis. We implemented his IP address generation algorithm for our torus.

The Flat Network

Apart from the torus network, an additional network with a NAT configuration exists. This network is used for external connectivity of the nodes and for SSH access from vagrant.

This picture shows the torus network.

Edge wrap-around links.

Begin by Generating the Base Box Image

In order to spin this up, you'll need a vagrant base box that is the basis of all the images used further on. For this we used Packer. The Packer scripts are complemented with a set of serverspec unit tests making sure that the box can be used further on.

Change into the 'packer' directory and run

To run the unit tests, run rake with the `buildcheck` option:

After the machine has been built, rake should report that the machine is fine. The box is tested to check that all required programs are installed, that the necessary settings are present, and that the vagrant user exists and is able to log in via public key over SSH. If it finishes with 'Machine installed correctly', everything is fine and the machine is ready to be used.

Add the file `centos6-a64.box` to the vagrant box list:

Starting up the Cluster

After the base box has been created and imported, running

in the directory where the Vagrantfile is located will spin up a cluster with 35 hosts (27 execution hosts, one login node, two NFS hosts, one queue master/scheduler node). Make sure to adjust the memory parameters and the number of vCPUs that are assigned to the nodes in the config file. Running this on a small laptop will bring it to its knees pretty hard; a decent server with a lot of RAM (20 GB or more) is recommended.

Running the Cluster Unit Test Suite

A Serverspec unit test suite exists to check if all hosts are reachable, if the routing daemons are running and if it's possible to submit compute jobs to the cluster.

Setting up the host's SSH client local configuration for the cluster node names

Now it's possible to connect via SSH to any of the nodes via

Configuring the serverspec environment.

Run the following command to install the dependencies

Because of the large number of checks, we integrated a viewer (thanks to Vincent Bernat) to display the results of the tests. This step can be skipped if the serverspec results are not of interest.

To configure the viewer (here nginx (Version 1.8.0)) we added the following configuration file:

Now everything is set up, and one can run serverspec and verify the cluster with the following command:

Options:

After the test has finished, one can start the webserver and verify that the tests ran fine. If all tests are green, the cluster works correctly and the following should be seen

How things are tied together

Unit Test Overview

We have two different kinds of serverspec tests in this project. The first serverspec test applies when you build the base box file for the cluster. This test is based on the serverspec plugin for our provisioner vagrant. After the build process of the machine is finished, you can run the test via rake. It spins up an instance of the built VM and then checks:

The second serverspec suite tests the cluster when it's up and running. It checks:

The results of the second test are not visible in the console. They are written to the reports directory, and if you configured the webserver correctly it should list the files from that directory on the site, where you can click on a results file and check whether everything is working.

Examples

To check how many execution hosts are available, run `qhost`:

To check if a node's routing daemon is running, run:

Todo

Please feel free to contribute to this project! Two things we are looking for